The Internet’s New Illusion

If you’ve been online recently, you’ve probably seen some strange clips: a bear bouncing on a trampoline, a monkey wrestling a crocodile, or animals behaving like they’re in a cartoon. They look real. They sound real. But they’re not.

Many of these viral videos were created using AI video generators such as Sora, Runway, and Kling. What began as a fun display of technology’s progress has quickly become something more profound: a glimpse into a future where it’s harder than ever to tell what’s real and what’s artificial.

In most cases, these videos are harmless. A dancing bear won’t hurt anyone. But the same tools can also generate fake news footage, false evidence, or realistic hoaxes. That’s where AI stops being entertainment and becomes a serious problem.

The Rise of Hyperreal AI Video

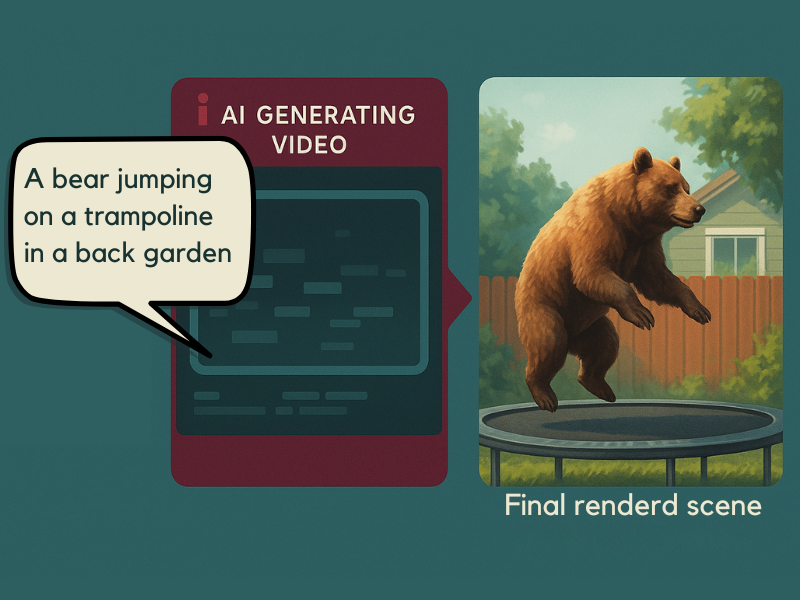

Modern AI tools can now create entire scenes from short text prompts. Type “A bear jumping on a trampoline in a back garden” and, within seconds, the model produces footage that looks like it was filmed on a real camera.

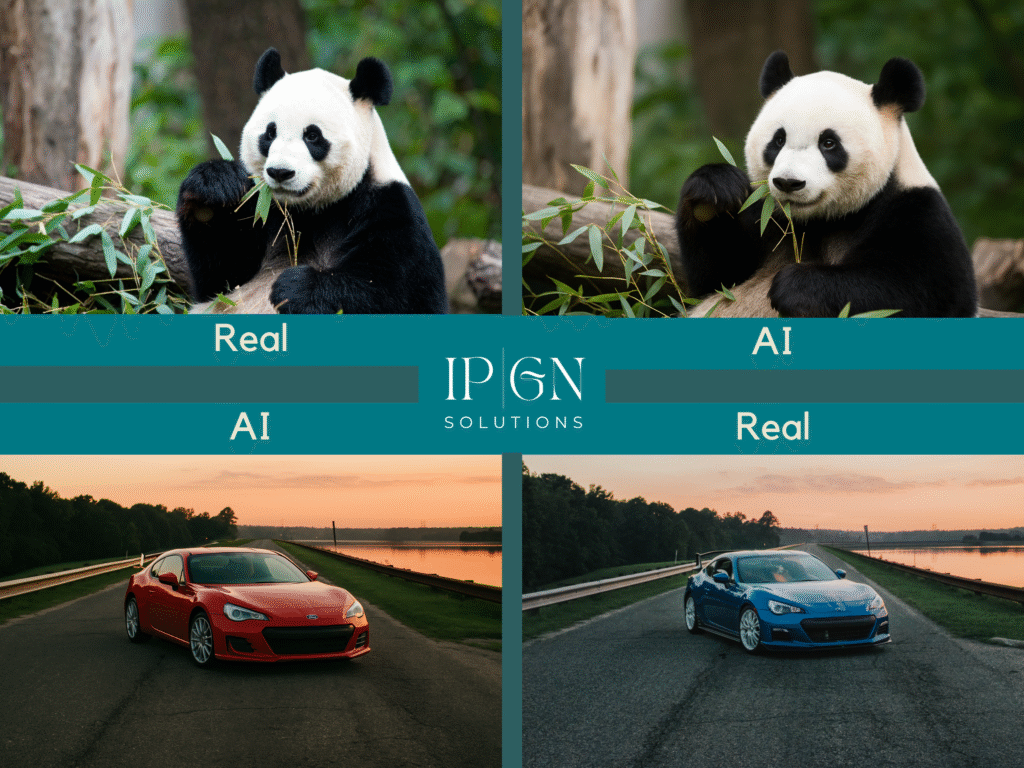

These systems have learned how light behaves, how fur moves, and how shadows fall. Because they are trained on vast libraries of real-world video, their results can look almost indistinguishable from genuine footage.

Just a year ago, AI videos looked warped and uncanny: characters with too many fingers, glitching backgrounds, and puppet-like movement. Today, they’re polished enough to fool most viewers at first glance.

That progress is both exciting and alarming.

Why It Matters

AI-generated video isn’t inherently bad. Many creators use it for art, storytelling, and innovation. But the same capability that fuels creativity can also power deception.

Imagine a fake clip showing a public figure saying something they never said, or a fabricated video of an event that never happened. It doesn’t take long for misinformation to spread, and by the time it’s debunked, the damage is already done.

As AI video generation becomes more accessible, the line between creativity and manipulation blurs. The challenge isn’t just identifying fakes; it’s preserving trust in what we see.

How to Spot an AI-Generated Video

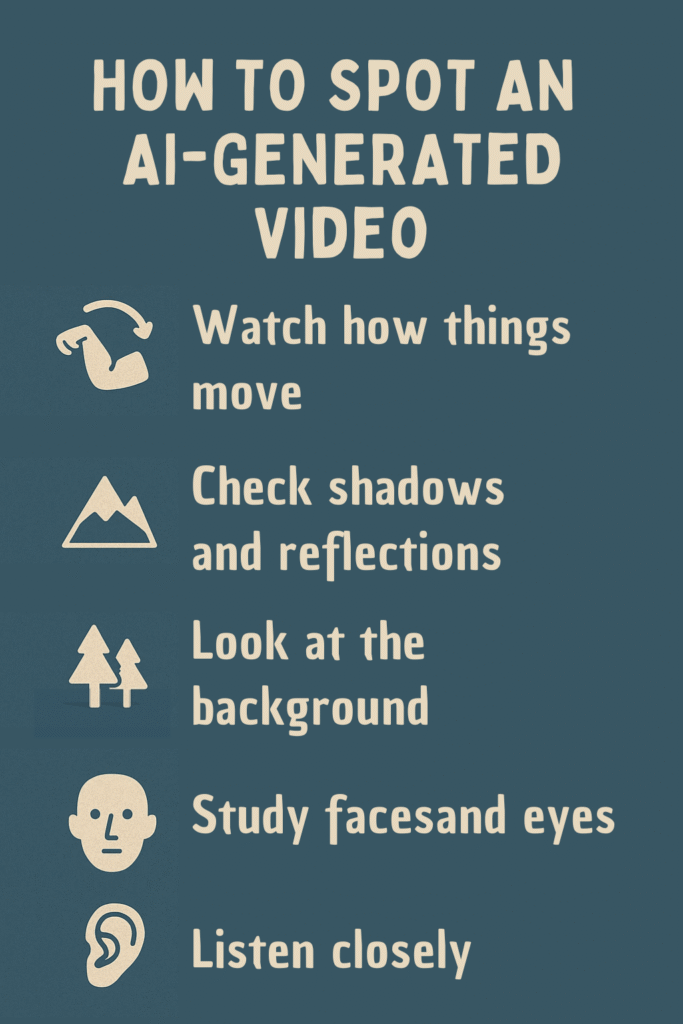

Even the most convincing AI clips leave subtle clues. Here are five key signs to look for when you’re unsure whether a video is real or synthetic:

1. Watch How Things Move

AI still struggles with realistic motion. Limbs might bend unnaturally, heads glide too smoothly, or objects pass through each other. If something looks slightly off, it probably is.

2. Check Shadows and Reflections

Lighting in AI videos often behaves incorrectly. Reflections might not match what’s in front of them. Shadows can flicker, change direction, or appear in impossible places.

3. Look at the Background

AI tends to focus on the main subject while neglecting detail behind it. Backgrounds can blur, duplicate, or flicker – signs, posters, and people sometimes distort for just a few frames.

4. Study Faces and Eyes

Facial animation remains one of AI’s hardest challenges. Watch for strange blinks, mismatched gaze, or smiles that freeze unnaturally. A still frame might look perfect, but motion often exposes the illusion.

5. Listen Closely

AI-generated sound can reveal the truth. Voices may sound too clean or slightly out of sync with lip movement. Background noise often loops or lacks the subtle imperfections that make real audio feel alive.

If you’re still uncertain:

- Run a reverse image or video search to see if the clip exists elsewhere.

- Check the uploader’s credibility and posting history.

- Look for confirmation from reputable journalists or fact-checking sites.

If no trusted sources are reporting it, pause before sharing.

When Seeing Is No Longer Believing

For years, we’ve treated video as the ultimate proof: “I saw it with my own eyes”. That phrase doesn’t hold the same weight anymore.

AI has changed how we define evidence. It’s no longer safe to assume that visual realism equals truth. That doesn’t mean we should panic or distrust everything online. It means we must adapt.

In the age of hyperreal AI, critical thinking becomes our most valuable skill. Before believing or reposting a video, pause and ask:

Who made this? Why was it made? And how was it created?

The Good Side of AI Creativity

AI itself isn’t the enemy. Like any technology, it reflects the intent of the people using it.

AI-generated video holds extraordinary potential for education, marketing, film, and design. It allows small businesses to create professional-quality visuals without expensive production costs. It can visualise ideas that were once impossible to capture.

But with great power comes responsibility, and it falls on both creators and viewers.

- Creators must be transparent when content is AI-generated.

- Viewers must stay informed and curious.

Together, these habits build a more trustworthy internet.

The Next Frontier of Digital Awareness

The coming years will redefine truth online. Recently, Elon Musk claimed that “Grok will make a watchable movie before the end of next year — and really good movies in 2027.” That trajectory shows how rapidly AI video will evolve. Detection tools will help, but awareness will remain our best defence.

Knowing what to look for, asking questions, and refusing to take everything at face value will matter more than ever.

At IPGN Solutions, we focus on helping organisations use AI responsibly, combining automation with awareness. We’ve seen first-hand how the right AI systems can make work smarter, faster, and safer. But we also understand that innovation without integrity can erode trust just as quickly as it builds it.

AI is here to stay, and that’s a good thing. We just need to use it with care, clarity, and common sense.

Stay Smart, Stay Curious

The next time you see a bear jumping on a trampoline or a monkey taking on a crocodile, enjoy the creativity, but keep your critical eye switched on.

In a world where AI can generate almost anything, human awareness is still the most powerful tool we have.